[🔥Hot Takes] Deep learning outperforms logistic regression for causal inference and tabular data??

It's a deep debate.

The paper I just read suggests that DL can outperform logistic regression for causal inference. Of course, I’ve saved that paper for the end of this article, because I’m like a grocery store that puts the milk a mile away from the cash register. While you’re trudging through the aisles of this email, please enjoy some tweets:

Hot Takes

First, a word from Jeremy Howard, multiple-time Kaggle Grandmaster and FastAI creator (June 2021):

This Elvis person seems conflicted. At first, Elvis declares the promise of DL (Oct 2021):

Then this drop in Apr 2022:

How should I interpret this paper in light of the first tweet?

In Nov 2022, some fancy XGBoost influencers get in the mix:

with this reply from Bojun:

I mean, I look to these people to just tell me what to think. Why can’t they agree?

Finally, a paper

Here’s the paper, “Evaluating Uses of Deep Learning Methods for Causal Inference,” which concludes that DL can outperform LR in simulations:

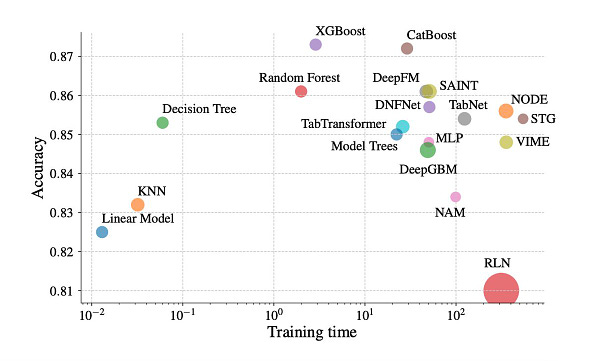

Logistic regression (LR) is a popular method that is used for estimating causal effects in observational studies using propensity scores. We examine the use of deep learning models such as the deep neural network (DNN), PropensityNet (PN), convolutional neural network (CNN), and convolutional neural network-long short-term memory network (CNN-LSTM) to estimate propensity scores and evaluate causal inference. We conducted studies using simulated data with different sample sizes (N = 500, N = 1000, N = 2000), 15 covariates, a continuous outcome and a binary exposure. These data were used in seven scenarios that were different in the degree of nonlinearity and nonadditivity associations between the exposure and covariates. Estimation of propensity scores was considered a classification task and performance metrics that included classification accuracy, receiver operating characteristic curve area under the curve (AUCROC), covariate balance, standard error, absolute bias, and the 95% confidence interval coverage were evaluated for each model. Our simulation results show that deep learning models (CNN, DNN, and CNN-LSTM) outperformed LR in the estimation of the propensity score. CNN and CNN-LSTM achieved good results for covariate balance, classification accuracy, AUCROC, and Cohen’s Kappa. Although LR provided substantially better bias reduction, it produced subpar performance based on classification accuracy, AUCROC, Cohen’s Kappa, and 95% confidence interval coverage compared to the deep learning models. The results suggest that deep learning methods, especially CNN, may be useful for estimating propensity scores that are used to estimate causal effects.
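The recipe in that abstract is: treat exposure as a classification target, predict a propensity score for each unit, then use the scores to balance the groups and estimate the effect. Here’s a minimal sketch of the plain logistic-regression arm, assuming numpy. The data-generating process, coefficients, true effect of 2.0, and helper names (`fit_logistic`, `ipw_ate`, `smd`) are my own hypothetical choices. Only the 15 covariates, binary exposure, and continuous outcome come from the abstract. I also picked inverse-probability weighting to turn scores into an effect estimate, which may not be the estimator the paper used.

```python
import numpy as np

# Hypothetical helpers and simulated data; this is NOT the paper's code or setup.

def fit_logistic(X, t, n_iter=25, ridge=1e-6):
    """Logistic regression via Newton-Raphson; returns coefficients, intercept first."""
    Xd = np.column_stack([np.ones(len(X)), X])  # prepend an intercept column
    beta = np.zeros(Xd.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-Xd @ beta))  # current predicted probabilities
        # Hessian of the negative log-likelihood; tiny ridge term keeps the solve stable
        H = Xd.T @ (Xd * (p * (1 - p))[:, None]) + ridge * np.eye(Xd.shape[1])
        beta += np.linalg.solve(H, Xd.T @ (t - p))  # Newton step using the score X^T (t - p)
    return beta

def predict_proba(beta, X):
    Xd = np.column_stack([np.ones(len(X)), X])
    return 1.0 / (1.0 + np.exp(-Xd @ beta))

def ipw_ate(y, t, ps):
    """Normalized (Hajek) inverse-probability-weighted estimate of the average effect."""
    w1 = t / ps
    w0 = (1 - t) / (1 - ps)
    return np.sum(w1 * y) / np.sum(w1) - np.sum(w0 * y) / np.sum(w0)

def smd(X, t, weights=None):
    """Standardized mean difference per covariate, optionally weighted (covariate balance)."""
    if weights is None:
        weights = np.ones(len(t))
    m1 = np.average(X[t == 1], axis=0, weights=weights[t == 1])
    m0 = np.average(X[t == 0], axis=0, weights=weights[t == 0])
    pooled_sd = np.sqrt((X[t == 1].var(axis=0) + X[t == 0].var(axis=0)) / 2)
    return (m1 - m0) / pooled_sd

rng = np.random.default_rng(0)
n, k, true_effect = 2000, 15, 2.0
X = rng.normal(size=(n, k))
# Exposure depends on the first three covariates (linear on the logit scale)
logit = 0.8 * X[:, 0] - 0.5 * X[:, 1] + 0.4 * X[:, 2]
t = rng.binomial(1, 1 / (1 + np.exp(-logit)))
# Outcome shares covariates with the exposure, so the naive difference is confounded
y = true_effect * t + 1.5 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(size=n)

ps = predict_proba(fit_logistic(X, t), X)
naive = y[t == 1].mean() - y[t == 0].mean()
ate = ipw_ate(y, t, ps)
weights = np.where(t == 1, 1 / ps, 1 / (1 - ps))

print(f"naive absolute bias: {abs(naive - true_effect):.3f}")
print(f"IPW absolute bias:   {abs(ate - true_effect):.3f}")
print("max |SMD| before weighting:", np.abs(smd(X, t)).max().round(3))
print("max |SMD| after weighting: ", np.abs(smd(X, t, weights)).max().round(3))
```

Since the true effect is baked into the simulation, absolute bias is just `abs(estimate - true_effect)`, and covariate balance is read off the weighted standardized mean differences.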

Note: I’m not sure why they didn’t try XGBoost. That’s like assessing your portfolio’s returns without benchmarking against the S&P. Sure, you got a 15% return, but the S&P got 20%… sooooo…

Also, I take issue when people don’t do good feature engineering. It’s not hard to do good feature engineering. And of course XGBoost or a DNN may outperform a basic LR if the authors don’t give the LR the love it requires.
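Here’s what giving the LR some love can look like. The paper’s scenarios vary the nonlinearity and nonadditivity of the exposure model, and a logistic regression can only capture those if you hand it the right terms. This is a minimal sketch, assuming numpy and a made-up propensity built from a squared term and an interaction (I’m assuming that shape; it is not taken from the paper’s scenarios). `add_features` and `auc` are hypothetical helpers, and each model is scored by AUC on a held-out half.

```python
import numpy as np
from itertools import combinations

# Hypothetical helpers and simulated data; not the paper's code or scenarios.

def fit_logistic(X, t, n_iter=25, ridge=1e-6):
    """Logistic regression via Newton-Raphson; returns coefficients, intercept first."""
    Xd = np.column_stack([np.ones(len(X)), X])
    beta = np.zeros(Xd.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-Xd @ beta))
        H = Xd.T @ (Xd * (p * (1 - p))[:, None]) + ridge * np.eye(Xd.shape[1])
        beta += np.linalg.solve(H, Xd.T @ (t - p))
    return beta

def predict_proba(beta, X):
    Xd = np.column_stack([np.ones(len(X)), X])
    return 1.0 / (1.0 + np.exp(-Xd @ beta))

def add_features(X):
    """Raw covariates plus squared terms plus all pairwise interactions."""
    pairs = [X[:, i] * X[:, j] for i, j in combinations(range(X.shape[1]), 2)]
    return np.column_stack([X, X ** 2] + pairs)

def auc(y_true, score):
    """ROC AUC via the Mann-Whitney rank statistic (assumes no tied scores)."""
    ranks = np.empty(len(score))
    ranks[np.argsort(score)] = np.arange(1, len(score) + 1)
    n1 = y_true.sum()
    n0 = len(y_true) - n1
    return (ranks[y_true == 1].sum() - n1 * (n1 + 1) / 2) / (n1 * n0)

rng = np.random.default_rng(1)
n, k = 4000, 5
X = rng.normal(size=(n, k))
# Made-up propensity: a squared term plus an interaction, no linear main effects
logit = 1.5 * X[:, 0] ** 2 - 1.5 + 1.5 * X[:, 1] * X[:, 2]
t = rng.binomial(1, 1 / (1 + np.exp(-logit)))

train, test = slice(0, n // 2), slice(n // 2, n)  # fit on one half, score on the other
results = {}
for name, feats in [("plain LR", X), ("LR + features", add_features(X))]:
    beta = fit_logistic(feats[train], t[train])
    results[name] = auc(t[test], predict_proba(beta, feats[test]))
    print(f"{name}: held-out AUC = {results[name]:.3f}")
```

On this toy setup the raw-covariate LR should land near coin-flip AUC, because the true logit has no linear main effects, while the expanded model can recover the structure. The trade-off: you have to know (or search for) the right terms, which is what XGBoost and DNNs try to learn on their own.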

Lastly, this is a simulation. Why don’t they just use real data?? I haven’t simulated data since college, because nobody should care about theoretical approximations when the real data is right in front of you. Either it outperforms or it doesn’t. No theory needed.

Till the next paper/tweet…